Learning Timescales in Gated and Adaptive Continuous Time Recurrent Neural Networks

Stefan Heinrich, Tayfun Alpay, Yukie Nagai

Conference: Proceedings of the 2020 IEEE International Conference on Systems, Man, and Cybernetics, pp. 2662-2667, Toronto, CA, Oct 2020

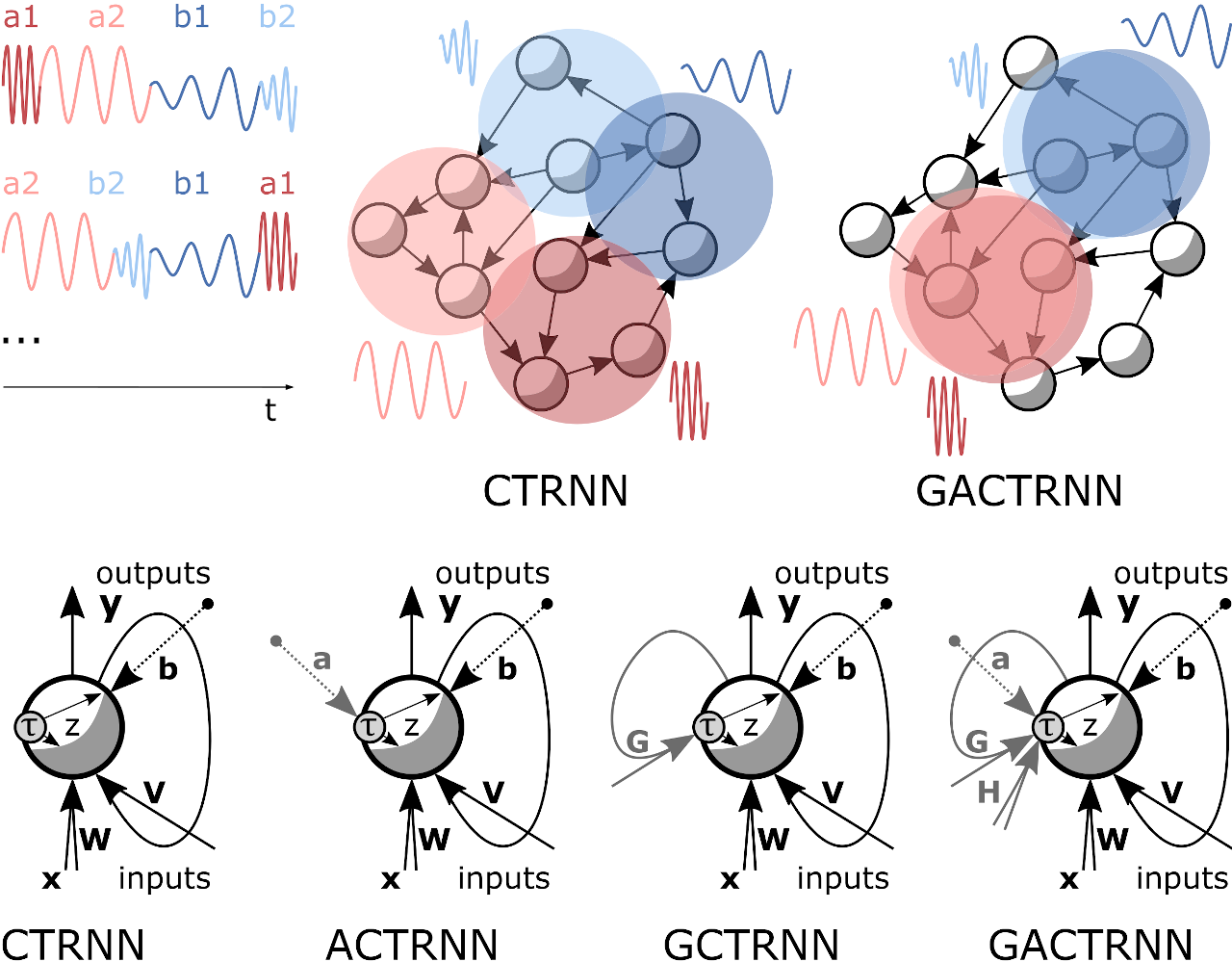

Abstract: Recurrent neural networks that can capture temporal characteristics on multiple timescales are a key architecture in machine learning solutions as well as in neurocognitive models. A crucial open question is how these architectures can adopt both multi-term dependencies and systematic fluctuations from the data or from sensory input, similar to the adaptation and abstraction capabilities of the human brain. In this paper, we propose an extension of the classic Continuous Time Recurrent Neural Network (CTRNN) that allows it to learn to gate its timescale characteristic during activation and thus to dynamically change its timescales while processing sequences. This mechanism is simple but bio-plausible, as it is motivated by the modulation of oscillation modes between neural populations. We test how the novel Gating Adaptive CTRNNs can solve difficult synthetic sequence prediction problems, and we explore the development of the timescale characteristics as well as the interplay of multiple timescales. As a particularly interesting finding, we report the emergence of timescale distributions that simultaneously capture systematic patterns and spontaneous fluctuations. Our extended architecture is of interest for cognitive models that aim to investigate the development of specific timescale characteristics under temporally complex perception and action, and vice versa.