Towards Robust Speech Recognition for Human-Robot Interaction

Stefan Heinrich, Stefan Wermter

Conference: Proceedings of the IROS2011 Workshop on Cognitive Neuroscience Robotics (CNR), pp. 29-34, Sep 2011

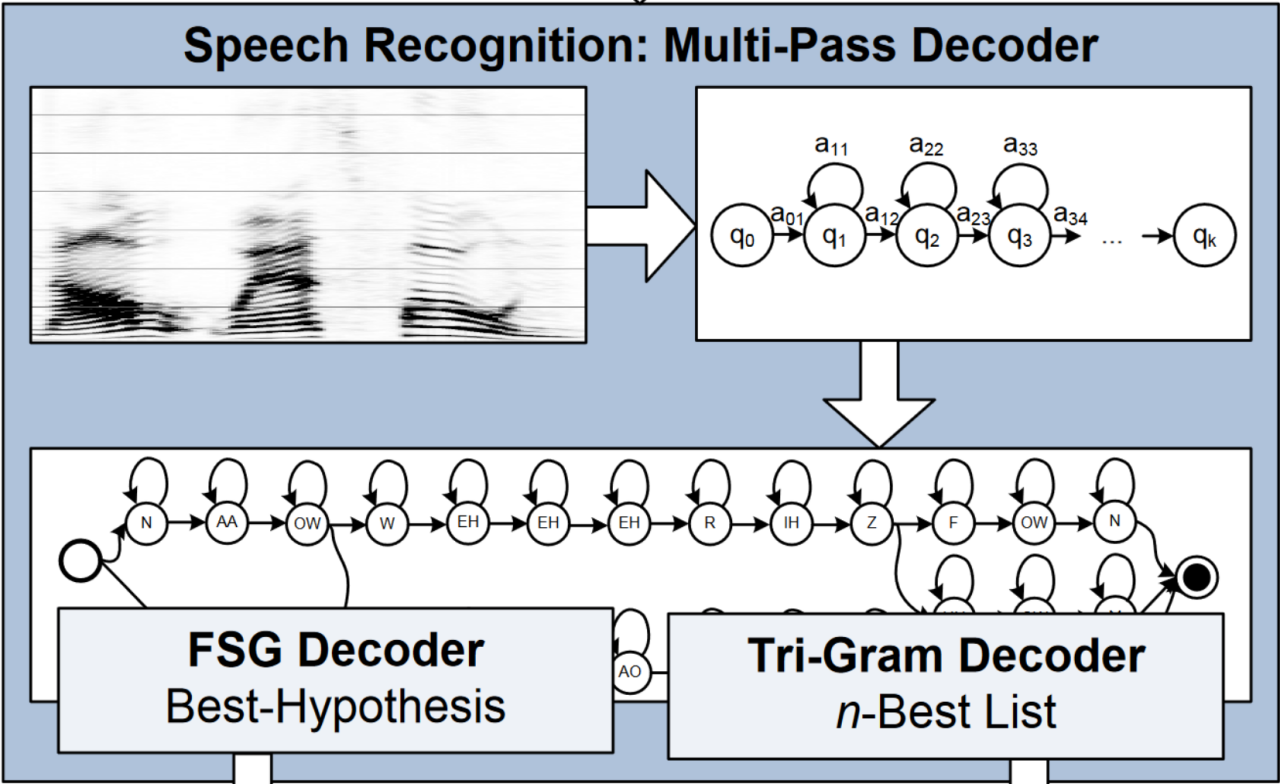

Abstract: Robust speech recognition under noisy conditions, as in human-robot interaction (HRI) in a natural environment, can often only be achieved by relying on a headset and by restricting the set of available utterances or the set of speakers. Current automatic speech recognition (ASR) systems are commonly based on finite-state grammars (FSG) or statistical language models such as Tri-grams, which achieve good recognition rates but have specific limitations, such as a high rate of false positives or insufficient sentence accuracy. In this paper we compare different forms of spoken human-robot interaction, contrasting a ceiling boundary microphone and the microphones of the humanoid robot NAO with a headset. We describe and evaluate an ASR system using a multipass decoder, which combines the advantages of an FSG and a Tri-gram decoder, and show its usefulness in HRI.